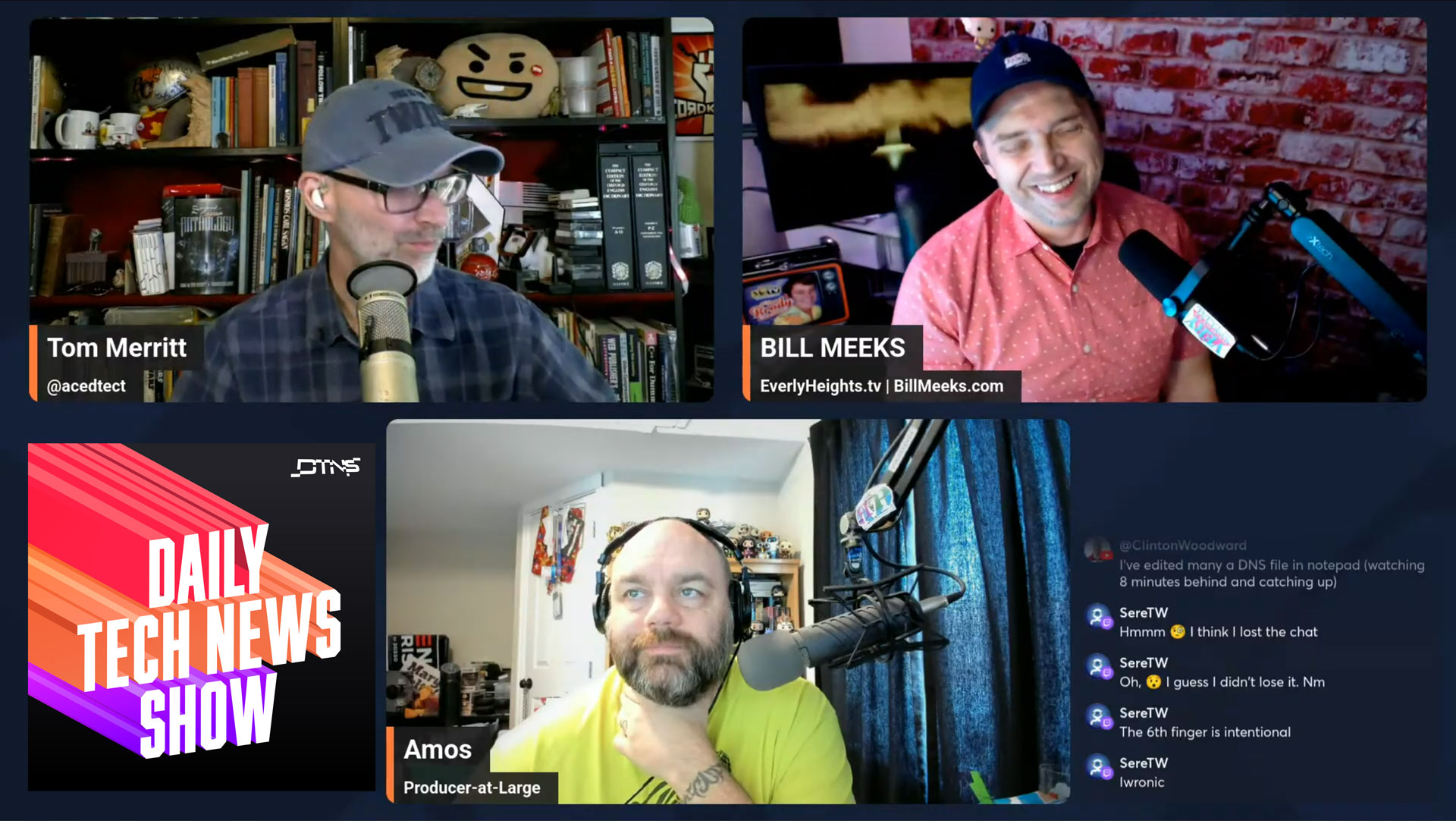

This week, I went on the Daily Tech News Show’s Friday Hangout to plug Everly Heights Tales and chat with my ol’ buddy Tom Merritt. We got into why I’ve moved the Everly Heights project I started in 2020 away from AI, creatively. If you don’t feel like watching the video, I’m including my perspective in human-written text below. You can enjoy the full conversation with Tom above, which gets into more detail about everything, or read the full post below for the Cliff Notes. Plus, now I have a link to send people as they discover my “AI past” and think I’m a slop-slinger.

Okay. Here goes:

For a couple years, I put my professional creative energy into harnessing generative AI as a professional tool in an actual storytelling pipeline, not just a gacha machine for pretty images or a way to make my buddy look doofy. I dug deep into my artistic and design skill sets to build custom Stable Diffusion models trained on my own art. This included models that created character turnarounds for animation, phoneme sets for lip-sync, background plates, and prop generators, all in a consistent artistic style. My style, since the models were all trained on my own artwork. It was never about replacing people. It was about making me quicker at creating so I could tell my stories. Plus, there was a lot of money flying around for projects like mine, so it gave me a budget.

I used that budget to hire actors, pay collaborators, and figure out a way for an indie creator to make an animated pilot like Very Special without compromising the story. But the culture turned faster than the craft. Even when the work was ethical, artisanal, and personal, the AI association alone started repelling people before they could set foot in Everly Heights. I’ve seen that movie. The Fakist “satirical fake news” concept flipped from of-the-moment when I wrote the pilot to “icky” by the time I published it six months later. Why do I do this? Who can say? So, I’ve spent the last 10 months pulling back on AI tech for Everly Heights. Not because the tools failed, but because my creative vision was losing articulation as it denoised across the cultural latent space. If you use Stable Diffusion, you get that joke.

The worst part about it? People started accusing me of not writing my own stories. For the record, while I now use LLMs to brainstorm and organize my thoughts in planning stages, I type out every script and story on the site and do countless revisions on them myself. The old-fashioned way, even. I start with a legal pad and a pen as my primary tech when writing. If you ask the Cornerstone Theater Players about my scripts, those talented voice actors will assure you they contain many human errors since I turn them around so quickly… Mostly typos from when my dyslexia kicks in and I don’t catch it. They’ll also let you know I include a lot of improv in our recording sessions to let their human personalities shine through.

Yes, I still use AI quietly and surgically, human-first. Sometimes I use it to preserve a voice I can’t physically sustain consistently because I’m getting older, sometimes to honor a friend who didn’t get to help me on this project. For this season of Everly Heights Tales, I’m reclaiming authorship in the most literal way: drawing/designing all the cover art myself without AI, moving slower, and making damn sure no one mistakes my work, stories, or craft for slop. These are quite literally the stories of my life, and people thinking I’m churning them out in a slop factory is the last thing I want.